Visual Informative Part Attention Framework for Transformer-based Referring Image Segmentation

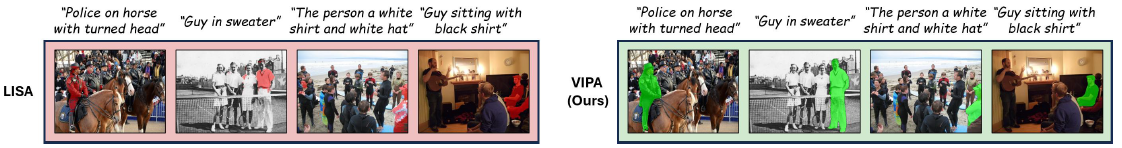

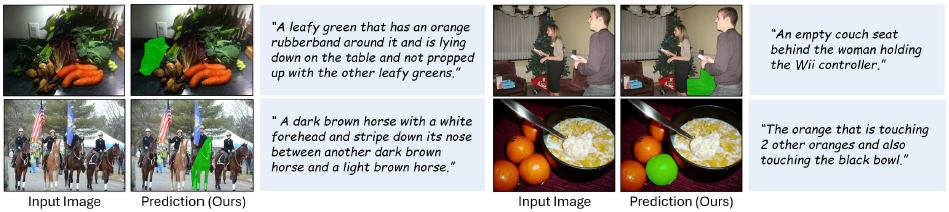

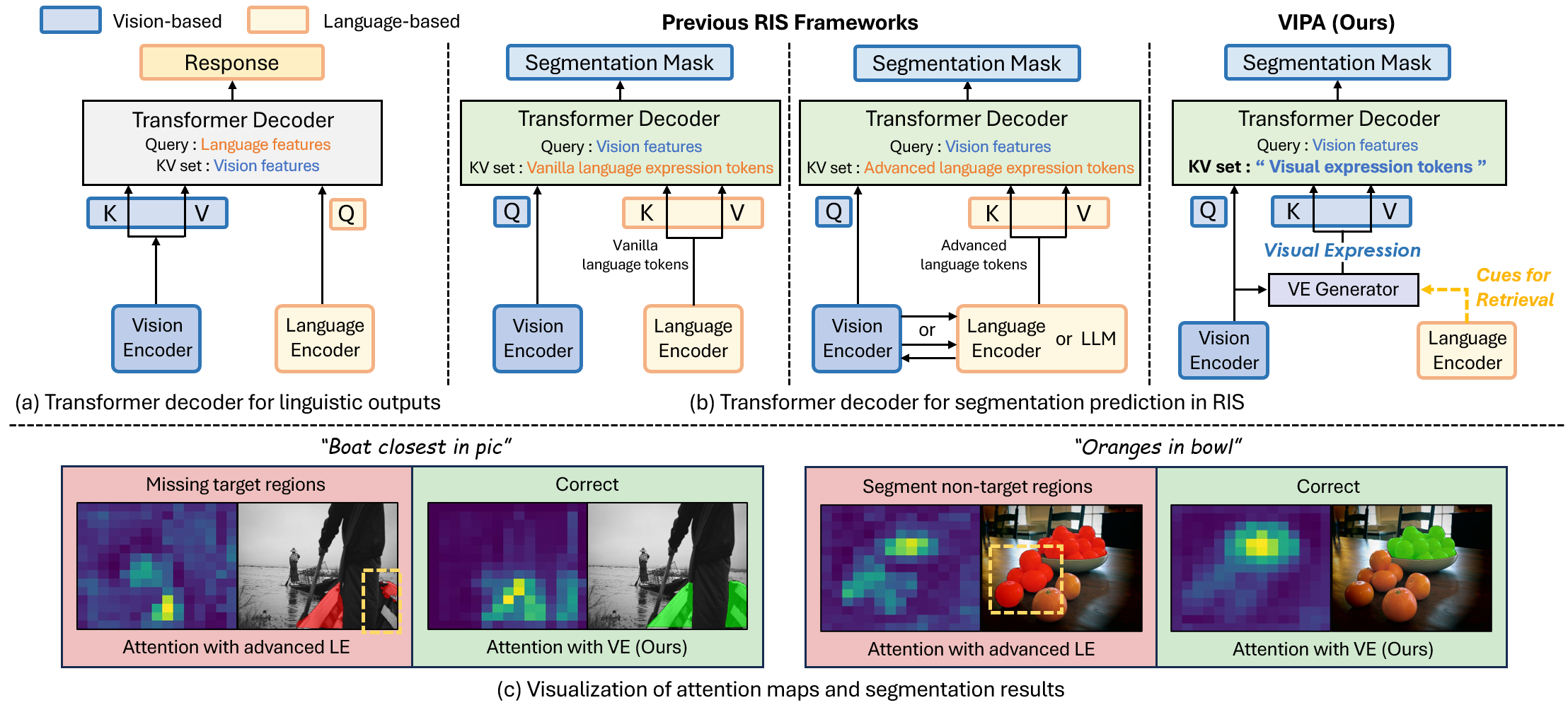

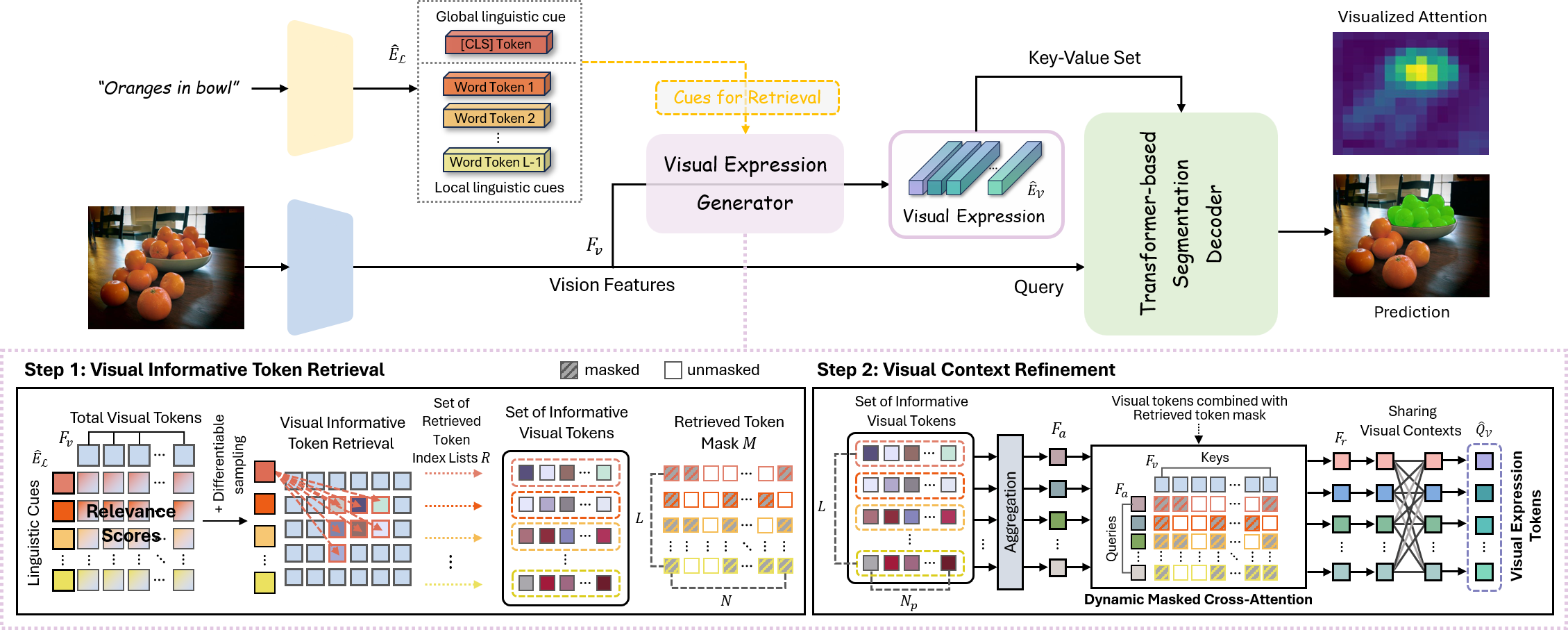

Referring Image Segmentation (RIS) aims to segment a target object described by a natural language expression. Existing methods have evolved by incorporating visual information into the language tokens. To exploit visual contexts more effectively for fine-grained segmentation, we propose a novel Visual Informative Part Attention (VIPA) framework for referring image segmentation. VIPA leverages the informative parts of the visual context, called a visual expression, which provides structural and semantic information about the visual target to the network. We also design a visual expression generator (VEG) module, which retrieves informative visual tokens via local-global linguistic context cues and refines the retrieved tokens to reduce noise and share informative visual attributes. This module allows the visual expression to consider comprehensive contexts and capture the semantic visual contexts of informative regions. In this way, our framework enables the network's attention to align robustly with the fine-grained regions of interest. Extensive experiments and visual analysis demonstrate the effectiveness of our approach. VIPA outperforms existing state-of-the-art methods on four public RIS benchmarks.

1. We propose a novel Visual Informative Part Attention (VIPA) framework for referring image segmentation, which leverages the informative parts of visual contexts as a key-value set for the vision query features in the Transformer-based segmentation decoder. Our approach is the first to explore the potential of the visual expression in the attention mechanism for referring segmentation.

2. We present a visual expression generator module, which retrieves informative visual tokens via local-global linguistic cues and refines them to mitigate distraction from noise and to share visual attributes. This module enables the visual expression to consider comprehensive contexts and capture semantic visual contexts for fine-grained segmentation.

3. Our method consistently shows strong performance on four public RIS benchmarks. The visual analysis of attention results clearly demonstrates the effectiveness of our framework for referring image segmentation.
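The pipeline described in the contributions above (retrieve informative visual tokens with local-global linguistic cues, refine them, then use them as the key-value set for the vision queries) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the text here does not specify the scoring function, the refinement layers, or any learned projections, so the cosine-similarity scoring, the equal weighting of local and global scores, the projection-free attention, and all function names are assumptions made purely for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def retrieve_informative_tokens(visual, words, sentence, k):
    """Select the k visual tokens that best match the linguistic cues.

    visual: (N, d) visual tokens, words: (T, d) word-level (local) features,
    sentence: (d,) sentence-level (global) feature.
    ASSUMPTION: cosine similarity, local and global scores summed equally.
    """
    v = visual / np.linalg.norm(visual, axis=1, keepdims=True)
    w = words / np.linalg.norm(words, axis=1, keepdims=True)
    s = sentence / np.linalg.norm(sentence)
    local_score = (v @ w.T).max(axis=1)   # best-matching word per visual token
    global_score = v @ s                  # match with the whole expression
    top = np.argsort(-(local_score + global_score))[:k]
    return visual[top], top

def refine(tokens):
    """ASSUMPTION: refinement as residual self-attention among the retrieved
    tokens, so they can share attributes; no learned weights are used."""
    d = tokens.shape[1]
    attn = softmax(tokens @ tokens.T / np.sqrt(d))
    return tokens + attn @ tokens

def cross_attend(queries, keys_values):
    """Vision queries attend to the visual expression (used as keys and values)."""
    d = queries.shape[1]
    attn = softmax(queries @ keys_values.T / np.sqrt(d))
    return attn @ keys_values, attn

# Toy example with random features (hypothetical sizes).
rng = np.random.default_rng(0)
visual = rng.normal(size=(64, 32))    # 64 visual tokens, 32 channels
words = rng.normal(size=(5, 32))      # 5 word tokens
sentence = words.mean(axis=0)         # stand-in for a sentence embedding

visual_expression, idx = retrieve_informative_tokens(visual, words, sentence, k=8)
visual_expression = refine(visual_expression)
out, attn = cross_attend(visual, visual_expression)
print(out.shape, attn.shape)
```

In this sketch the number of retrieved tokens `k` plays the role of the hyperparameter varied in the ablation on the number of retrieved informative visual tokens; a real model would add learned query, key, and value projections and multi-head attention.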

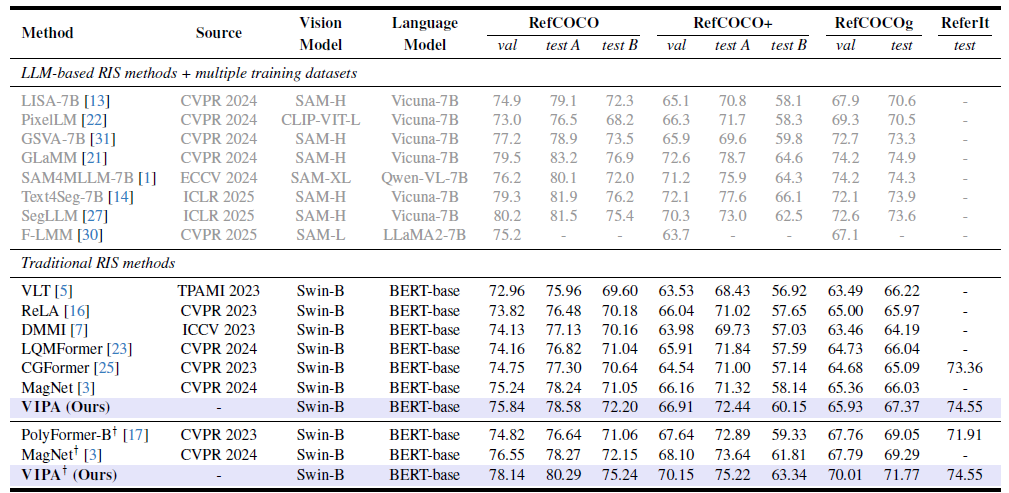

Table 1. Performance comparison with state-of-the-art methods on four public referring image segmentation datasets. LLM-based models are marked in gray. † indicates models trained on multiple RefCOCO-series datasets, with validation and test images removed to prevent data leakage. For the ReferIt dataset, only the ReferIt training set is used.

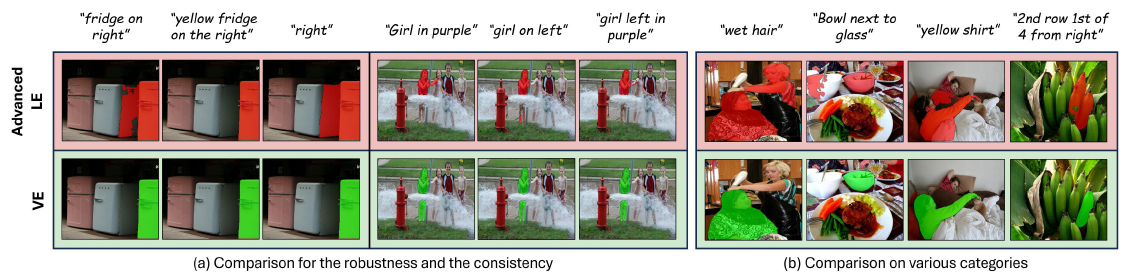

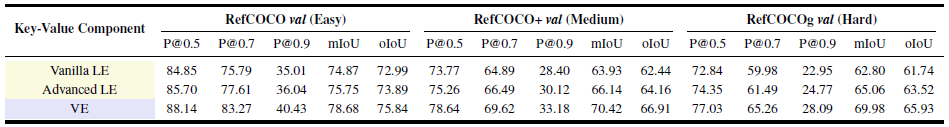

Table 2. Main ablation for the effectiveness of our method. LE: Linguistic Expression tokens. VE: Visual Expression tokens (Ours).

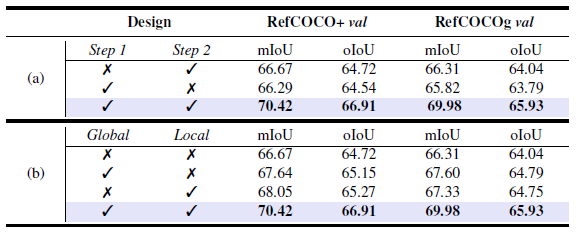

Table 3. Ablation studies for the design of the visual expression generator module.

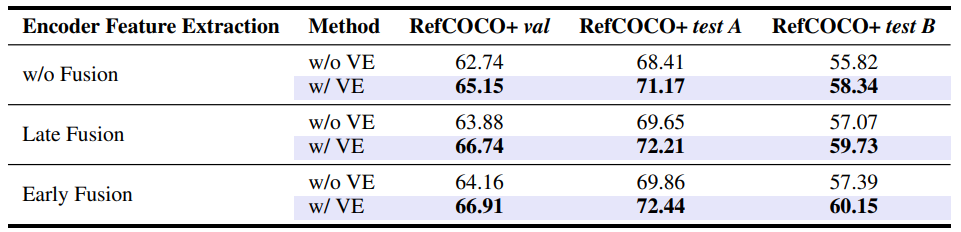

Table 4. Ablation for different encoder fusion types to verify the versatility of our method.

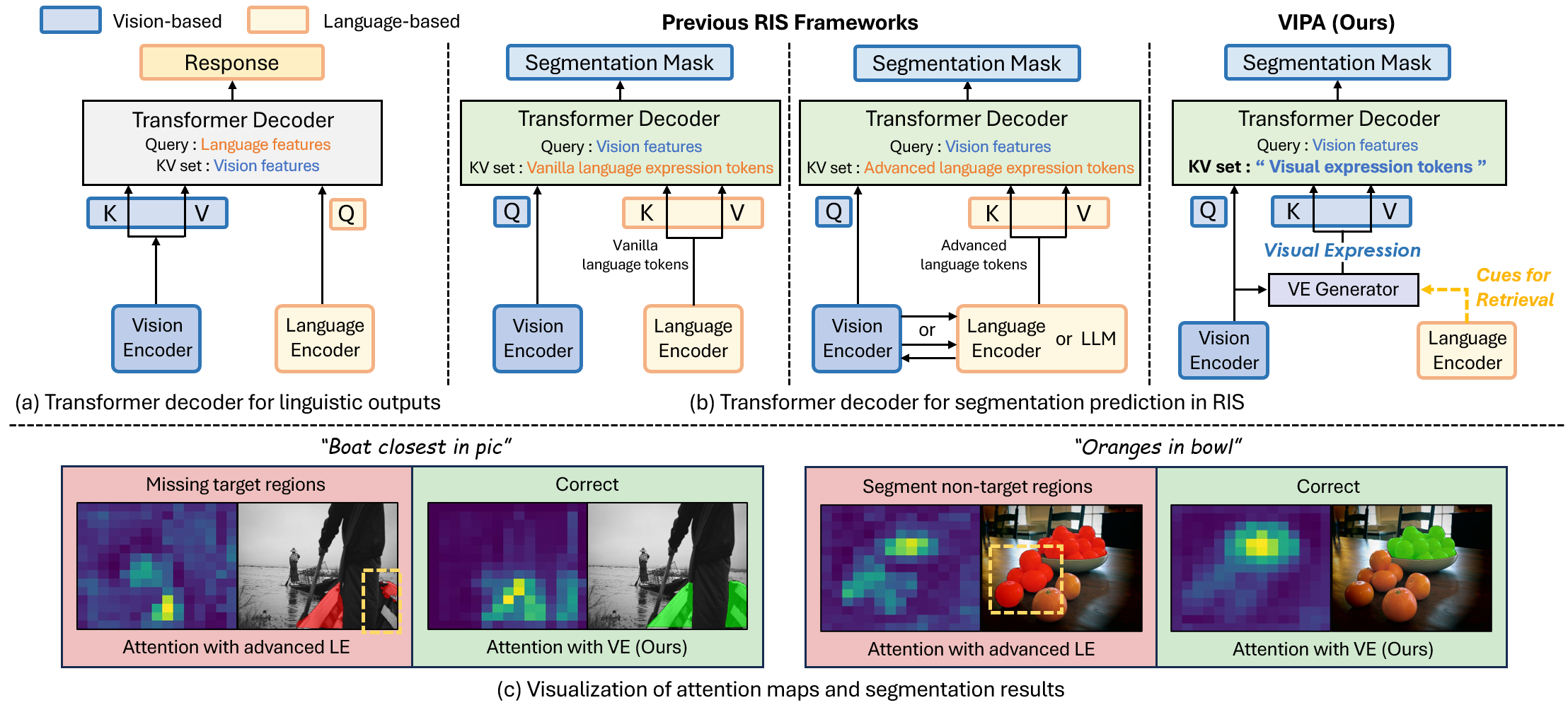

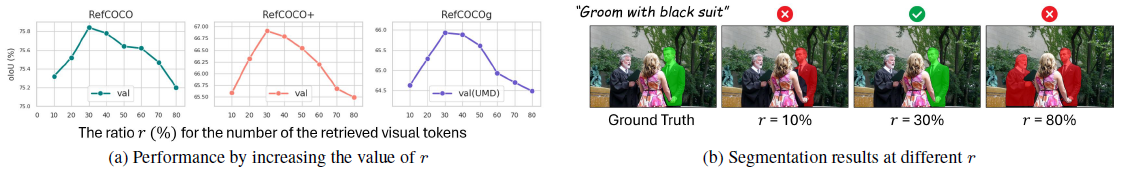

Figure 3. Ablation study on the number of retrieved informative visual tokens.